From a raw radar archive to the flood map on the case page.

Six stages. Six real scripts. Six artefacts on disk. This page walks the exact WSL workflow that produced every image in the Banda Aceh case, ending with a scrubbable view of the training run that built the model itself.

- Students learn what each button in a SAR pipeline actually does, and why the numbers on the case page move.

- Teachers get a ready-made 8-minute walkthrough with copy-pasteable commands and a “check yourself” prompt per stage.

- Reviewers can verify that every image shown on this site is the lossless output of a script linked below.

By the end of this walkthrough you should be able to:

- Name the 5 transformations a raw Sentinel-1 archive goes through before a model sees it.

- Explain why clamp normalization is one of the most sensitive knobs in a SAR flood pipeline.

- Read a pairwise agreement matrix without being fooled by the big number.

- Point at the epoch in a training run that produced the deployed checkpoint — and justify why.

You need:

- Python basics (run a script, read an import)

- A rough idea of what a convolutional layer is

- A terminal

You don't need:

- SNAP installed: intermediate TIFs are shipped

- A GPU: the case outputs are pre-computed

- Prior SAR experience: the glossary is at the bottom

Five transformations.

Raw radar bytes → a map you can hand to a responder.

Before zooming into any single stage, keep this shape in your head. Every box below is a real file or folder on disk; every arrow is a real script. The stages further down the page zoom into each arrow one at a time.

One dispatcher, five real scripts.

Every stage on this page maps to a concrete file under sar_toolkit/. A single dispatcher (run_banda_aceh_pipeline.py) owns the step→script table, and a small env-var contract pins inputs and outputs without hard-coding WSL paths.

Script: sar_toolkit/run_banda_aceh_pipeline.py

The toolkit runs on a WSL2 Ubuntu box with SNAP, GDAL, PyTorch and a CUDA GPU. Everything is pinned in environment-sar-toolkit.yml. A single env var — ASIA_FLOOD_BASE_DIR — points at the working tree that holds raw SAFE archives, intermediate TIFs, the pickle index, and the checkpoint. Set it once, never edit code paths again.

Each stage below is a first-class script, not a notebook cell. That matters for teaching: students can run one stage, inspect outputs/, then run the next with confidence that nothing upstream is hiding in memory.

- WSL base: ASIA_FLOOD_BASE_DIR (/home/yang/asia_flood_base)

- Python env: conda · sar-toolkit (environment-sar-toolkit.yml)

- Entry point: python -m sar_toolkit … (run_banda_aceh_pipeline.py)

- Stage table: STEP_TO_SCRIPT = {...} (preprocess · build-dataset · predict · validate · stats)

Check yourself: Which single environment variable makes the whole pipeline portable across machines?

Code excerpt from sar_toolkit/run_banda_aceh_pipeline.py:

```shell
# WSL · activate the toolkit env
$ conda activate sar-toolkit
$ export ASIA_FLOOD_BASE_DIR=/home/yang/asia_flood_base

# Run any one stage in isolation
$ python sar_toolkit/run_banda_aceh_pipeline.py predict
$ python sar_toolkit/run_banda_aceh_pipeline.py validate

# Or reproduce build-dataset → predict → validate in one shot
$ python sar_toolkit/run_banda_aceh_pipeline.py reproduce-no-sar
```

```python
# The step table (run_banda_aceh_pipeline.py)
STEP_TO_SCRIPT = {
    "preprocess": "preprocess/snap_preprocess_banda_aceh.py",
    "build-dataset": "dataset/prepare_dataset_from_three_tifs.py",
    "stats": "infer/calculate_banda_aceh_stats.py",
    "predict": "infer/predict_banda_aceh_adapted.py",
    "validate": "validate/validate_predictions.py",
}
```
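How a dispatcher like this might turn a step name into a command can be sketched in a few lines. This is an illustration only, not the toolkit's actual code: `resolve_base_dir` and `build_command` are hypothetical helper names, and the real script may resolve paths differently.

```python
import os
from pathlib import Path

# Step table copied from the pipeline excerpt.
STEP_TO_SCRIPT = {
    "preprocess": "preprocess/snap_preprocess_banda_aceh.py",
    "build-dataset": "dataset/prepare_dataset_from_three_tifs.py",
    "stats": "infer/calculate_banda_aceh_stats.py",
    "predict": "infer/predict_banda_aceh_adapted.py",
    "validate": "validate/validate_predictions.py",
}


def resolve_base_dir(env=None):
    """Read the working-tree location from ASIA_FLOOD_BASE_DIR (hypothetical helper)."""
    env = os.environ if env is None else env
    base = env.get("ASIA_FLOOD_BASE_DIR")
    if not base:
        raise RuntimeError("ASIA_FLOOD_BASE_DIR is not set")
    return Path(base)


def build_command(step, toolkit_root="sar_toolkit"):
    """Return the argv list for one stage without running it (hypothetical helper)."""
    if step not in STEP_TO_SCRIPT:
        raise ValueError(f"unknown step {step!r}; choose from {sorted(STEP_TO_SCRIPT)}")
    script = (Path(toolkit_root) / STEP_TO_SCRIPT[step]).as_posix()
    return ["python", script]


if __name__ == "__main__":
    print(resolve_base_dir({"ASIA_FLOOD_BASE_DIR": "/home/yang/asia_flood_base"}))
    print(build_command("predict"))
```

Keeping the table as plain data means an unknown step fails immediately with a readable message instead of a missing-file error deep inside a subprocess.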

Radar bytes → calibrated, terrain-corrected TIFs.

Three raw Sentinel-1A SAFE archives go into SNAP's GPT engine with an explicit graph XML, an external SRTM DEM for geocoding, and a two-step AOI crop. Out come three clean, radiometrically-calibrated, co-registered GeoTIFFs.

Script: preprocess/snap_preprocess_banda_aceh.py

The graph does Apply-Orbit-File → Calibration → Speckle-Filter → Range-Doppler Terrain-Correction → Subset in one GPT invocation, then a gdalwarp second pass tightens the bounding box so there are no black edges around the coast. The result is three co-registered, calibrated scenes at 10 m resolution that a downstream tiler can slice without further care.

This is also the only stage that needs SNAP. Everything after it is pure PyTorch + rasterio, so a student without SNAP can still reproduce from stage 02 onward using the shipped intermediate TIFs.

- Raw scenes: 3 × S1A SAFE.zip (20251021 · 20251102 · 20251126)

- Graph: GRD preprocessing w/ external DEM (preprocess/grd_preprocessing_external_dem.xml)

- DEM: SRTM 1 arc-sec (assets/dem/N05E095.tif)

- Processed TIFs: 3 × VV/VH · WGS84 · LZW (S1A_BandaAceh_<date>_snap_processed_final.tif)

- AOI: lon [95.25, 95.40] · lat [5.45, 5.60] (Banda Aceh coastal strip)

Check yourself: Why pass -PexternalDEMFile instead of letting SNAP auto-download the DEM?

Code excerpt from preprocess/snap_preprocess_banda_aceh.py:

```python
# preprocess/snap_preprocess_banda_aceh.py (excerpt)
from pathlib import Path

SNAP_HOME = Path("/home/yang/snap")
GRAPH_FILE = "preprocess/grd_preprocessing_external_dem.xml"
# Coarse AOI handed to SNAP's Subset step
AOI_WKT = "POLYGON((95.15 5.35, 95.50 5.35, 95.50 5.70, 95.15 5.70, 95.15 5.35))"
# Tight AOI applied by the gdalwarp second pass
FINAL_AOI = {"lon_min": 95.25, "lon_max": 95.40, "lat_min": 5.45, "lat_max": 5.60}
DATES = ["20251021", "20251102", "20251126"]
```

```shell
# 1) SNAP GPT: calibration, speckle, terrain correction, subset
$ gpt preprocess/grd_preprocessing_external_dem.xml \
    -PinputFile=S1A_IW_GRDH_20251126.SAFE.zip \
    -PoutputFile=S1A_BandaAceh_20251126_snap_processed.tif \
    -PgeoRegion="$AOI_WKT" \
    -PexternalDEMFile=$DEM/N05E095.tif -e

# 2) gdalwarp: precise final crop, kill black edges
$ gdalwarp -te 95.25 5.45 95.40 5.60 -te_srs EPSG:4326 \
    -r bilinear -co COMPRESS=LZW -co TILED=YES \
    in.tif S1A_BandaAceh_20251126_snap_processed_final.tif
```
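The two-pass crop can be sketched as a pair of command builders, one per date. The sketch assumes things the excerpt does not confirm: that every input archive follows the `S1A_IW_GRDH_<date>.SAFE.zip` pattern shown for 20251126, and that the gdalwarp source is the GPT output. `gpt_command` and `gdalwarp_command` are hypothetical names, and nothing is executed.

```python
AOI_WKT = "POLYGON((95.15 5.35, 95.50 5.35, 95.50 5.70, 95.15 5.70, 95.15 5.35))"
FINAL_AOI = {"lon_min": 95.25, "lon_max": 95.40, "lat_min": 5.45, "lat_max": 5.60}
DATES = ["20251021", "20251102", "20251126"]


def gpt_command(date, aoi_wkt, dem_path,
                graph="preprocess/grd_preprocessing_external_dem.xml"):
    """Build the SNAP GPT argv for one scene (file naming pattern is assumed)."""
    return [
        "gpt", graph,
        f"-PinputFile=S1A_IW_GRDH_{date}.SAFE.zip",
        f"-PoutputFile=S1A_BandaAceh_{date}_snap_processed.tif",
        f"-PgeoRegion={aoi_wkt}",
        f"-PexternalDEMFile={dem_path}",
        "-e",
    ]


def gdalwarp_command(date, aoi):
    """Build the tight-crop argv; -te takes xmin ymin xmax ymax."""
    src = f"S1A_BandaAceh_{date}_snap_processed.tif"  # assumed to be the GPT output
    dst = f"S1A_BandaAceh_{date}_snap_processed_final.tif"
    return [
        "gdalwarp",
        "-te", str(aoi["lon_min"]), str(aoi["lat_min"]),
        str(aoi["lon_max"]), str(aoi["lat_max"]),
        "-te_srs", "EPSG:4326",
        "-r", "bilinear",
        "-co", "COMPRESS=LZW", "-co", "TILED=YES",
        src, dst,
    ]


if __name__ == "__main__":
    for date in DATES:
        print(" ".join(gpt_command(date, AOI_WKT, "assets/dem/N05E095.tif")))
        print(" ".join(gdalwarp_command(date, FINAL_AOI)))
```

Passing argv lists rather than shell strings avoids quoting problems with the WKT polygon, which contains spaces and parentheses.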

3 big TIFs → 224² patches in KuroSiwo format.

The model was trained on KuroSiwo's tile layout, so the scene has to be cut into 224×224 patches with three temporal siblings per location — pre_event_1, pre_event_2, post_event — and an index pickle tying every patch back to its row/col.

Script: dataset/prepare_dataset_from_three_tifs.py · dataset/generate_pickle.py

Each patch folder carries three TIFs and a small info.json with its lon/lat/row/col. The pickle is a fast spatial index the Dataset class uses to stream batches — it's what gets looked up at inference time so we can reassemble predictions back to their geographic positions.

- 3 calibrated TIFs: VV + VH · 2-band (outputs/preprocess/processed_sar/)

- Patch size: 224 × 224 px · stride = 224 (no overlap, deterministic grid)

- Tiles: kurosiwo_format_v2/999/01/<hash>/ (MS1.tif + SL1.tif + SL2.tif + info.json)

- Index: grid_dict_banda_aceh.pkl (list of (record, row_idx, col_idx) tuples)

Check yourself: Why does each tile ship as three sibling files (MS1.tif / SL1.tif / SL2.tif) instead of one?

Code excerpt from dataset/prepare_dataset_from_three_tifs.py · dataset/generate_pickle.py:

```python
# dataset/prepare_dataset_from_three_tifs.py (excerpt)
from math import ceil

PATCH_SIZE = 224
ACT_ID, AOI_ID = 999, 1  # banda_aceh as a custom "event"

# Patch grid over the 2802260-pixel scene (~1672×1676)
n_rows, n_cols = ceil(H / 224), ceil(W / 224)

# For each patch location, write the KuroSiwo triplet:
write_tif("MS1.tif", post_event_patch)   # 20251126 · main scene
write_tif("SL1.tif", pre_event_1_patch)  # 20251102 · approach
write_tif("SL2.tif", pre_event_2_patch)  # 20251021 · baseline
write_json("info.json", {"row": row, "col": col, "lon": lon, "lat": lat})  # further fields elided

# dataset/generate_pickle.py: build the index
import pickle

grid_dict = {
    (act_id, aoi_id): [
        {"info": {"row": r, "col": c}, "path": "999/01/<hash>/"},  # further fields elided
        # ... one record per tile
    ]
}
# pickle.dump needs an open binary file handle, not a path string
with open("grid_dict_banda_aceh.pkl", "wb") as f:
    pickle.dump(grid_dict, f)
```
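The deterministic, non-overlapping grid can be sketched with NumPy alone. The scene size (1672 × 1676) is taken from the approximate figure in the excerpt, and zero-padding the edge tiles is an assumption: the page does not say how the real script handles the ragged border. `patch_grid` and `iter_patches` are hypothetical names.

```python
import math

import numpy as np

PATCH_SIZE = 224


def patch_grid(height, width, patch=PATCH_SIZE):
    """Number of tile rows and columns needed to cover the scene."""
    return math.ceil(height / patch), math.ceil(width / patch)


def iter_patches(scene, patch=PATCH_SIZE):
    """Yield (row, col, tile) for a (bands, H, W) array.

    Edge tiles are zero-padded to a full patch (an assumption of this sketch).
    """
    bands, height, width = scene.shape
    n_rows, n_cols = patch_grid(height, width, patch)
    padded = np.zeros((bands, n_rows * patch, n_cols * patch), dtype=scene.dtype)
    padded[:, :height, :width] = scene
    for r in range(n_rows):
        for c in range(n_cols):
            tile = padded[:, r * patch:(r + 1) * patch, c * patch:(c + 1) * patch]
            yield r, c, tile


if __name__ == "__main__":
    scene = np.ones((2, 1672, 1676), dtype=np.float32)  # stand-in VV + VH scene
    tiles = list(iter_patches(scene))
    print(patch_grid(1672, 1676), len(tiles), tiles[0][2].shape)
```

Because stride equals patch size, the (row, col) pair alone is enough to place a tile back in the scene, which is what the index relies on at inference time.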

Sample one 224² tile and see what's inside.

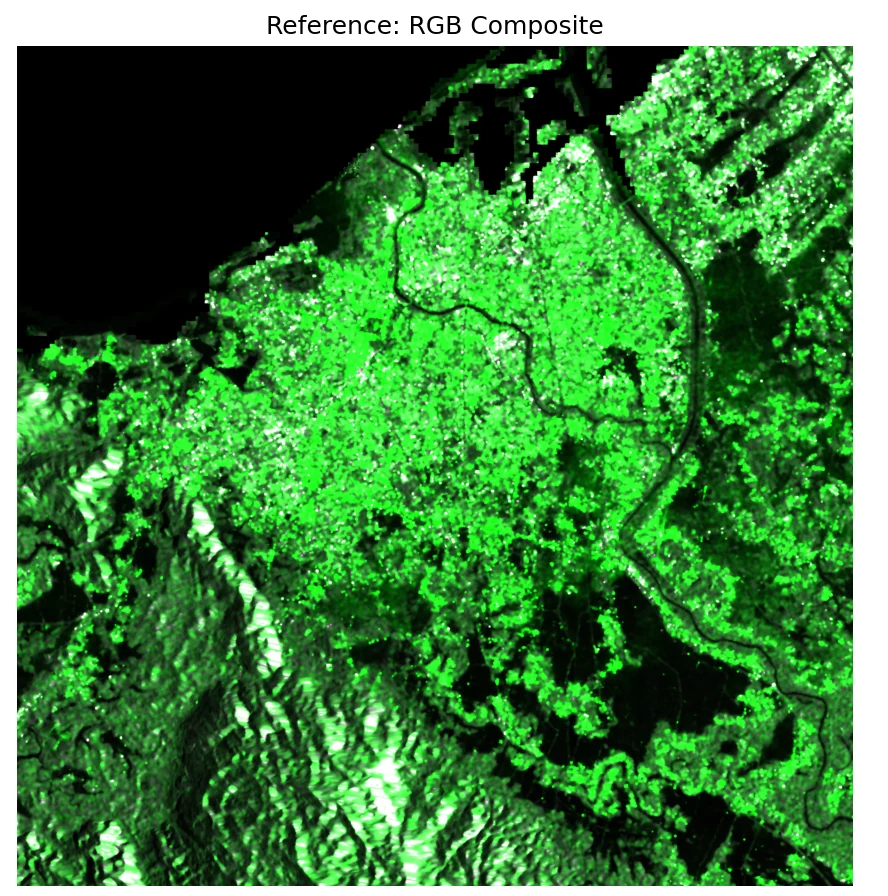

Each KuroSiwo-format tile directory packs 6 GeoTIFFs: VV + VH at three acquisition times — pre-event 1 (21 Oct, baseline), pre-event 2 (2 Nov, approach) and co-event (26 Nov, main flood scene). Press Sample to load a random tile from the …-tile Banda Aceh test split. Each click round-trips to the WSL box in ~1 s.

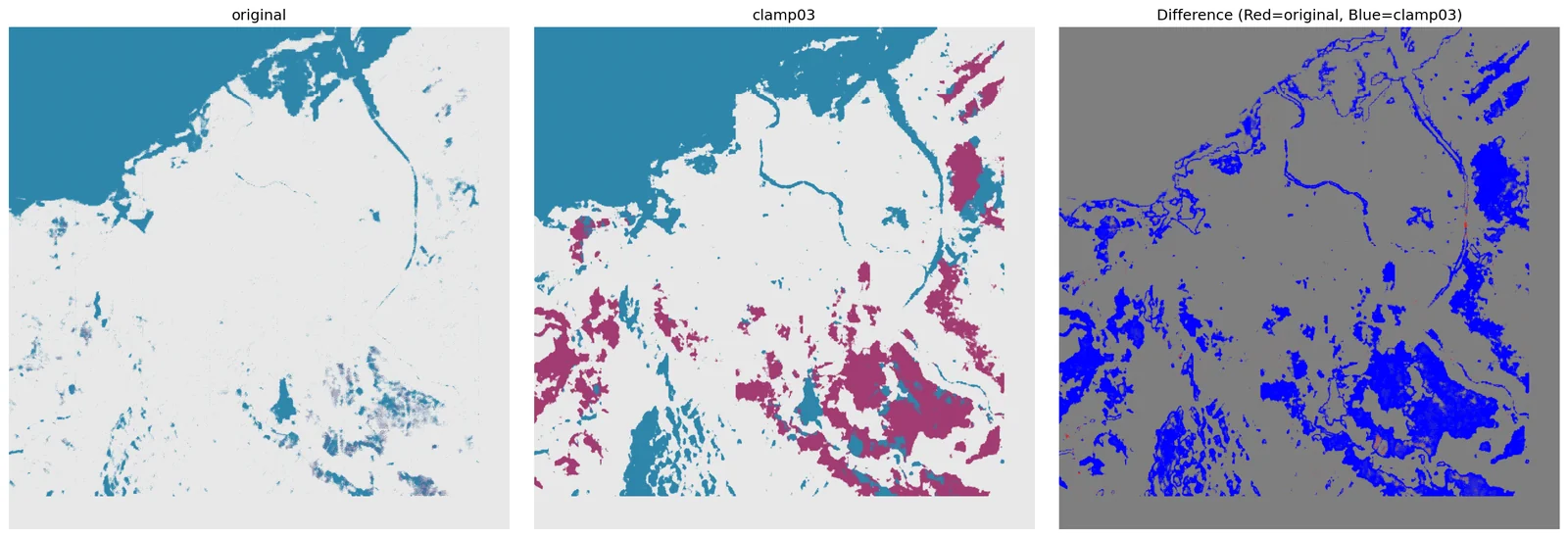

The root cause of the clamp story.

The training set (KuroSiwo) was dominated by scenes where VH backscatter rarely exceeded 0.15. Banda Aceh isn't like that. Before inference, we recompute per-region mean/std at three clamp cut-offs — and the numbers tell you immediately why clamp = 0.3 is the right choice.

Script: infer/calculate_banda_aceh_stats.py

Two facts drive the whole story on the case page:

- Banda Aceh VH is ~5–8× brighter than the KuroSiwo training mean. If you normalize with the training stats, almost every VH pixel is mapped to "very bright" → the model loses its ability to separate flood from vegetation.

- The clamp itself silently truncates pixels. At 0.15, 71% of VH values are clipped to the ceiling; the model never sees variation in the flooded paddies. At 0.5, only 16% clip, but speckle noise dominates. 0.3 is the sweet spot, and it is the setting recommended under CONFIGS on the case page.
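The first point is easy to check with arithmetic. The sketch below z-scores two VH values with the KuroSiwo training statistics reported by the stats script (mean 0.0264, std 0.0215): the Banda Aceh VH mean at clamp 0.15 (0.131718) and the clamp ceiling itself. It assumes plain `(x - mean) / std` normalization, which the page implies but does not spell out.

```python
KUROSIWO_VH_MEAN = 0.0264
KUROSIWO_VH_STD = 0.0215


def zscore(value, mean, std):
    """Standard (x - mean) / std normalization, assumed to match the Dataset class."""
    return (value - mean) / std


# Banda Aceh VH mean under clamp 0.15, from the stats table
mean_z = zscore(0.131718, KUROSIWO_VH_MEAN, KUROSIWO_VH_STD)
# The brightest value the model can ever see under clamp 0.15
ceiling_z = zscore(0.15, KUROSIWO_VH_MEAN, KUROSIWO_VH_STD)

if __name__ == "__main__":
    print(f"typical pixel: {mean_z:.2f} std above training mean")
    print(f"clamp ceiling: {ceiling_z:.2f} std above training mean")
    print(f"headroom:      {ceiling_z - mean_z:.2f} std")
```

A typical pixel lands almost five training standard deviations above the training mean, with less than one standard deviation of headroom before the ceiling, so most of the scene is squeezed into a narrow, very bright band.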

- Scenes: 3 × processed TIFs · VV + VH bands (only pixels > 0, skip NoData)

- Clamps swept: [0.15, 0.3, 0.5] (matches training-time, recommended, aggressive)

- Stats table: per-clamp VV/VH mean & std (configs/banda_aceh_adapted_configs.json)

- Finding: VH truncation 71% @ 0.15 → 35% @ 0.3 → 16% @ 0.5 (Banda Aceh VH mean is roughly 5× the KuroSiwo mean at clamp 0.15 and roughly 8× at clamp 0.3)

Check yourself: If VH at Banda Aceh is 5× brighter than the training mean, why does clamping VH to 0.15 hurt flood detection?

Code excerpt from infer/calculate_banda_aceh_stats.py:

```text
# infer/calculate_banda_aceh_stats.py (excerpt)
$ python sar_toolkit/run_banda_aceh_pipeline.py stats

# KuroSiwo training statistics (the baseline)
VV: mean=0.0953 std=0.0427
VH: mean=0.0264 std=0.0215

# Banda Aceh statistics under 3 clamp cut-offs
clamp = 0.15
  VV: mean=0.050021 std=0.034309
  VH: mean=0.131718 std=0.036703
# VH mean is 5× KuroSiwo · 71% of VH pixels are truncated

clamp = 0.30   <-- recommended
  VV: mean=0.053819 std=0.048925
  VH: mean=0.207845 std=0.091627
# VH truncation drops to 35%, std explodes, information returns

clamp = 0.50
  VV: mean=0.055808 std=0.060930
  VH: mean=0.256493 std=0.153328
# VH truncation 16%, but noise starts dominating signal
```
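A per-clamp statistic of this kind can be sketched as follows. This is not the toolkit's implementation: `clamp_stats` is a hypothetical name, counting a pixel as truncated when it is strictly above the clamp is an assumption, and the demo band is random data rather than Banda Aceh backscatter.

```python
import numpy as np


def clamp_stats(band, clamp):
    """Mean, std and truncated fraction of one band under a clamp ceiling."""
    valid = band[band > 0]                      # skip NoData, as the stats card describes
    truncated = float((valid > clamp).mean())   # share of pixels hitting the ceiling
    clipped = np.minimum(valid, clamp)          # what the model would actually see
    return {
        "mean": float(clipped.mean()),
        "std": float(clipped.std()),
        "truncated_frac": truncated,
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    demo_vh = rng.gamma(shape=2.0, scale=0.12, size=100_000)  # synthetic, illustrative only
    for clamp in (0.15, 0.3, 0.5):
        print(clamp, clamp_stats(demo_vh, clamp))
```

Raising the clamp always lowers the truncated fraction and raises the standard deviation, which is the trade-off the stats table shows between lost detail and admitted noise.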

Drag the clamp, watch the model's view of Banda Aceh change.

Every bar is a VH backscatter bucket. Everything to the right of your clamp value gets clipped to the ceiling, exactly as it is before the model sees it. Find the clamp that keeps the flood tail visible without drowning in speckle. This one runs entirely in your browser.

UNetRSMamba · 6 channels in, 3 classes out, 4 configs.

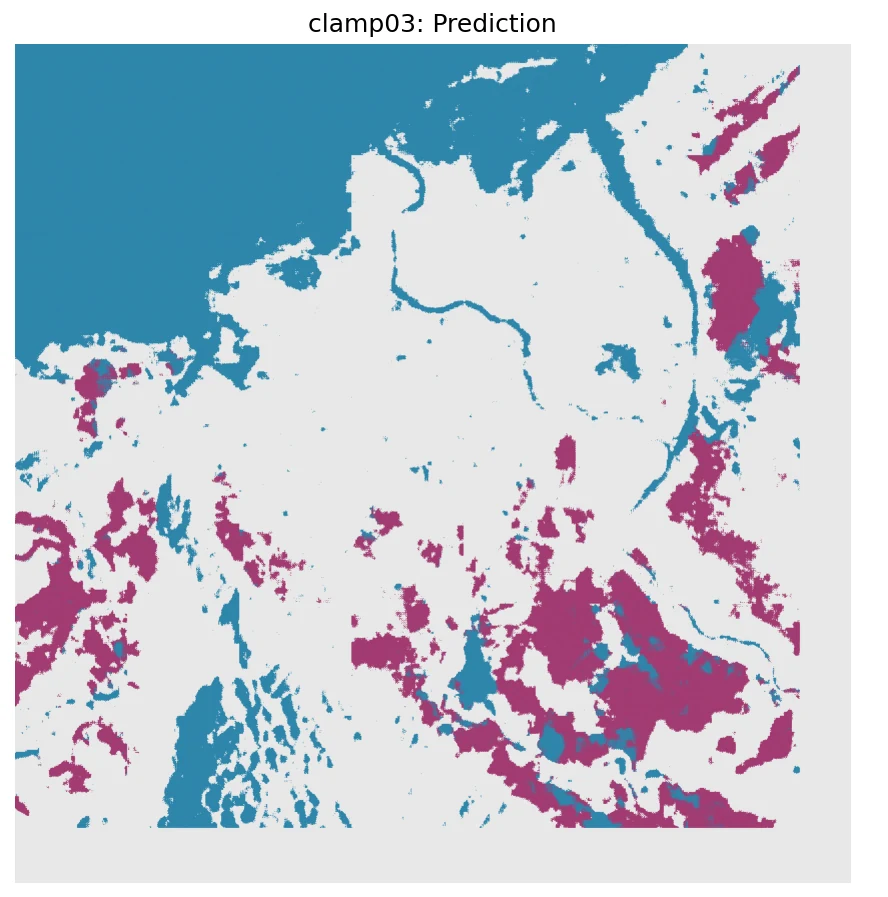

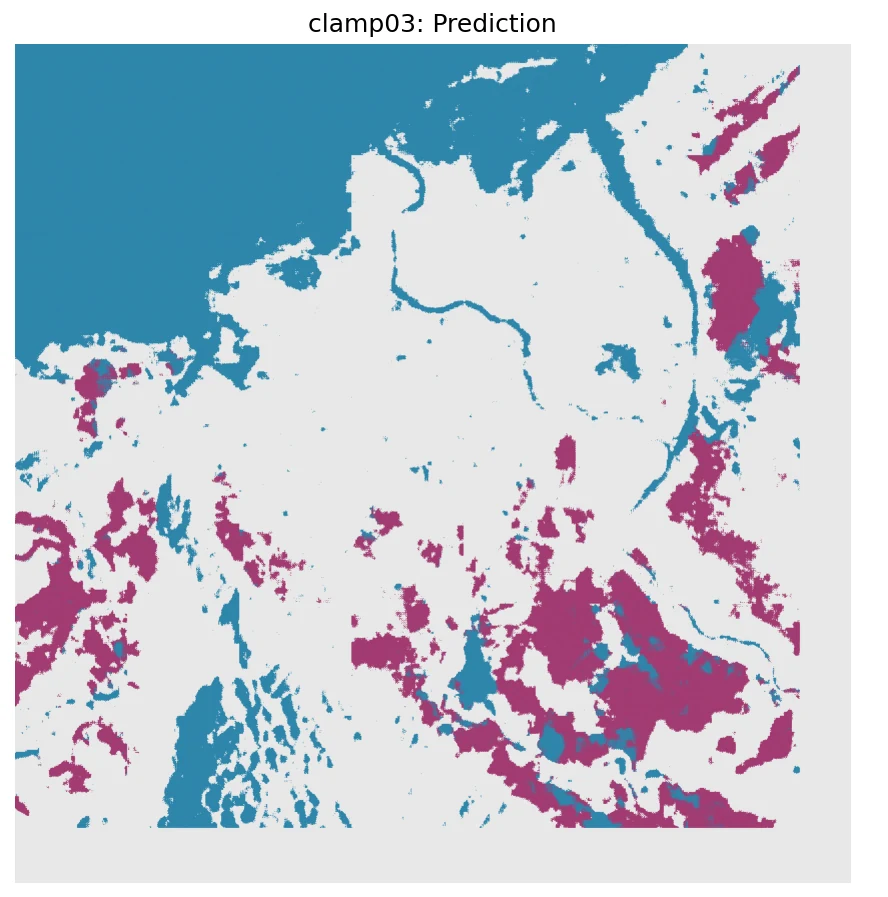

The trained checkpoint is loaded once; the Dataset is rebuilt four times with four different (clamp, mean, std) triples. Each run stitches 224² predictions back to a full 2.8-million-pixel map and writes a GeoTIFF plus a stats JSON.

Script: infer/predict_banda_aceh_adapted.py

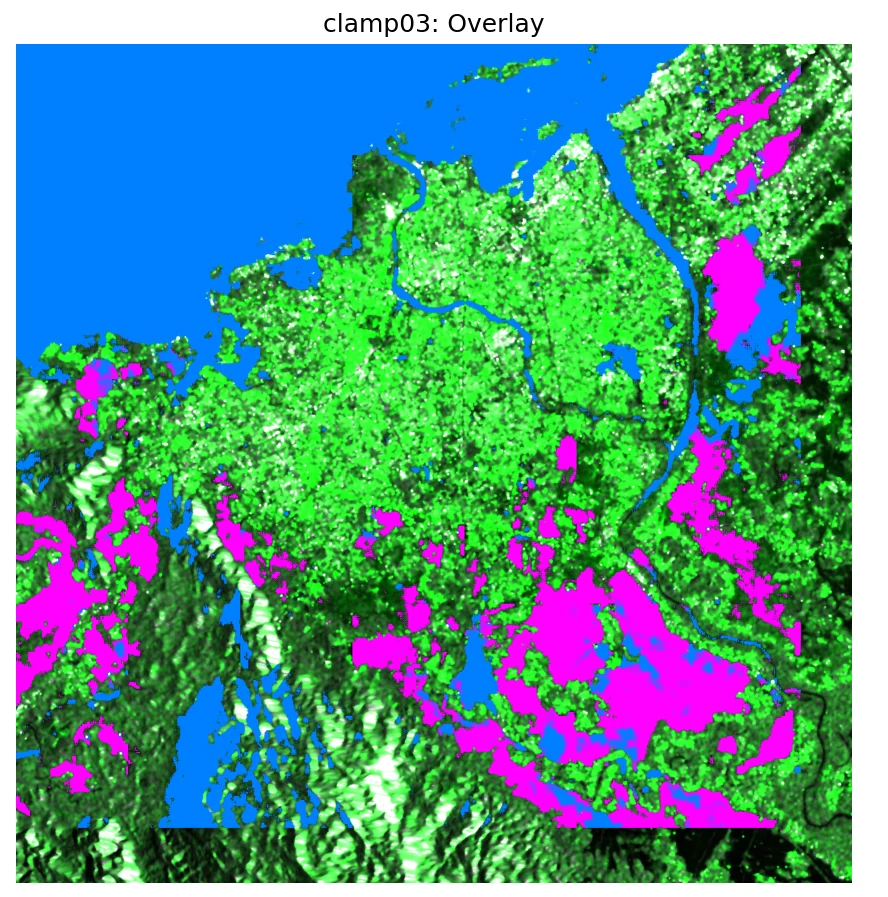

The input tensor is the temporal stack: both pre-event scenes (20251021, 20251102) and the post-event scene (20251126), each contributing VV+VH, for 6 channels total. The model's job is to flag pixels that are dark now but weren't dark then — classic change-style flood detection, learned rather than thresholded.
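The channel bookkeeping can be sketched with NumPy standing in for PyTorch, so it runs without a GPU. The stacking order (pre_event_2, pre_event_1, post_event) follows the prediction script; `stack_inputs` and `decide` are hypothetical names, and the random scores are placeholders for real model output.

```python
import numpy as np


def stack_inputs(pre2, pre1, post):
    """Concatenate three (B, 2, H, W) VV+VH stacks into one (B, 6, H, W) input."""
    return np.concatenate([pre2, pre1, post], axis=1)


def decide(scores):
    """Turn (B, 3, H, W) class scores into (B, H, W) labels: 0=land, 1=water, 2=flood."""
    return scores.argmax(axis=1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pre2, pre1, post = (rng.random((4, 2, 224, 224), dtype=np.float32) for _ in range(3))
    x = stack_inputs(pre2, pre1, post)
    scores = rng.random((4, 3, 224, 224), dtype=np.float32)  # placeholder for model(x)
    print(x.shape, decide(scores).shape)
```

Channels 0 to 1 hold the oldest scene and channels 4 to 5 the post-event scene, so the network always finds the "now" and "then" views in the same slots.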

Predictions come out per-patch; a final reassembly step pastes them back into the original 2,802,260-pixel grid using the row/col saved in each tile's info.json. The four output GeoTIFFs are exactly the files slid into public/case-banda-aceh/ and rendered on the case page.
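A minimal sketch of that reassembly step, assuming a non-overlapping 224-pixel grid and edge tiles that extend past the scene border (so they are cropped when pasted back). The function below is an illustration with a hypothetical signature, not the toolkit's own `reassemble`.

```python
import numpy as np

PATCH = 224


def reassemble(patches, height, width, patch=PATCH):
    """Paste per-tile label maps into a (height, width) scene.

    `patches` maps (row, col) -> (patch, patch) array of class ids.
    """
    full = np.zeros((height, width), dtype=np.uint8)
    for (row, col), pred in patches.items():
        top, left = row * patch, col * patch
        h = min(patch, height - top)   # crop tiles that overhang the bottom edge
        w = min(patch, width - left)   # crop tiles that overhang the right edge
        if h <= 0 or w <= 0:
            continue                   # tile lies entirely outside the scene
        full[top:top + h, left:left + w] = pred[:h, :w]
    return full


if __name__ == "__main__":
    tiles = {(r, c): np.full((PATCH, PATCH), (r + c) % 3, dtype=np.uint8)
             for r in range(8) for c in range(8)}
    scene = reassemble(tiles, 1672, 1676)
    print(scene.shape, np.bincount(scene.ravel(), minlength=3))
```

Because placement depends only on the stored row and column, tiles can be predicted in any order or batch size and still land in the right place.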

- Checkpoint: UNetRSMamba_FloodFocus_best_model.pt (assets/checkpoints/)

- Model: UNet backbone · RSMamba blocks · 3-class head (embed_dim=96 · depths=[1,1,6,1])

- Input tensor: cat([pre_event_2, pre_event_1, post_event], dim=1) (6 channels · 224² · float32)

- GeoTIFF × 4: flood_prediction.tif · 0=land, 1=water, 2=flood (outputs/banda_aceh/prediction_results_adapted_<cfg>/)

- Stats JSON × 4: no_water_pct · permanent_water_pct · flood_pct (prediction_stats.json)

Check yourself: The input tensor has 6 channels. Where do the 6 come from?

Code excerpt from infer/predict_banda_aceh_adapted.py:

```python
# infer/predict_banda_aceh_adapted.py (excerpt)
model = UNetRSMamba(
    img_size=224, in_channels=6, num_classes=3,
    embed_dims=[96, 192, 384, 768], depths=[1, 1, 6, 1], d_state=16,
).to(device).eval()
model.load_state_dict(torch.load("…/FloodFocus_best_model.pt")["model_state_dict"])

# For each of 4 configs: rebuild Dataset with new clamp/mean/std
for cfg_key in ["original", "clamp015", "clamp03", "clamp05"]:
    cfg = ADAPTED_CONFIGS[cfg_key]
    ds = Dataset(mode="test", configs={
        "clamp_input": cfg["clamp_input"],
        "data_mean": cfg["data_mean"],
        "data_std": cfg["data_std"],
        # ... remaining config keys elided
    })
    with torch.no_grad():
        for _, _, image, _, _, _, pre1, _, _, pre2 in loader:
            x = torch.cat([pre2, pre1, image], dim=1)  # (B, 6, 224, 224)
            pred = model(x).argmax(1).cpu().numpy()    # (B, 224, 224)
            reassemble(pred, row_idx, col_idx)         # into 2.8M-pixel map

    # Write the stitched map and its stats; `profile` (GeoTIFF metadata) is
    # assumed to be defined elsewhere in the script.
    with rasterio.open("flood_prediction.tif", "w", **profile) as dst:
        dst.write(full_map, 1)
    with open("prediction_stats.json", "w") as f:
        json.dump(stats, f)
```


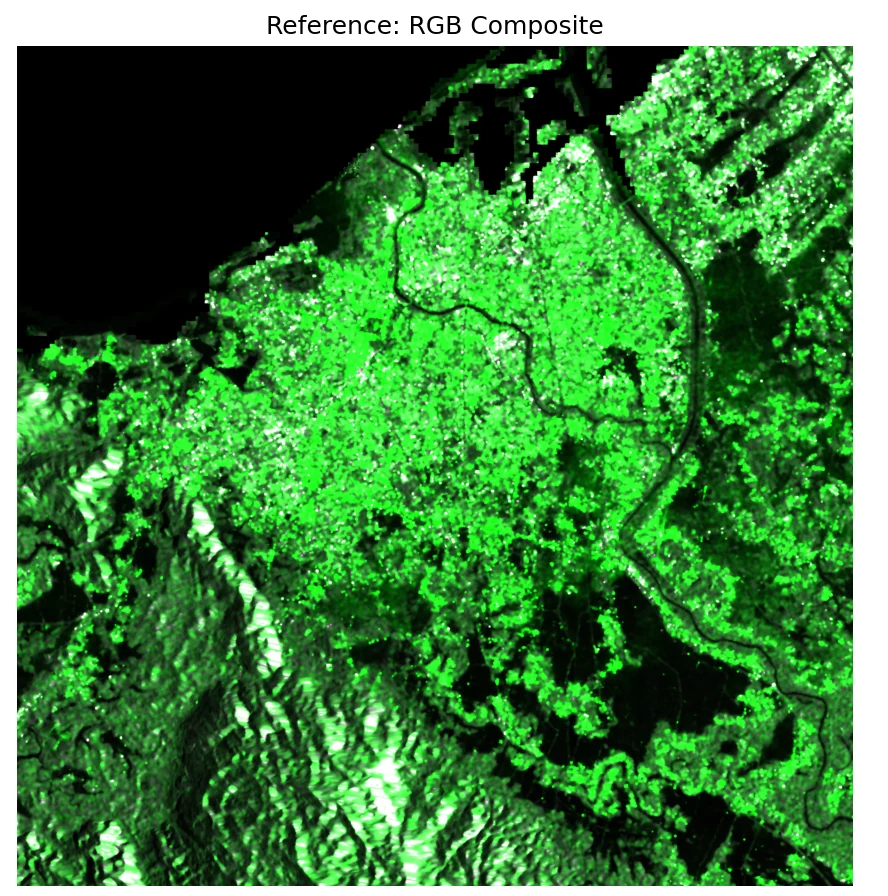

Same config, two ways to see it.

Pick a clamp configuration, then flip the switch between CACHED (the figure that ships with the paper) and LIVE (a real forward pass on the WSL GPU right now). Same model, same weights — LIVE just feeds a fresh 224×224 test tile through it on demand.

prediction_report.json · full scene

No ground truth? Triangulate.

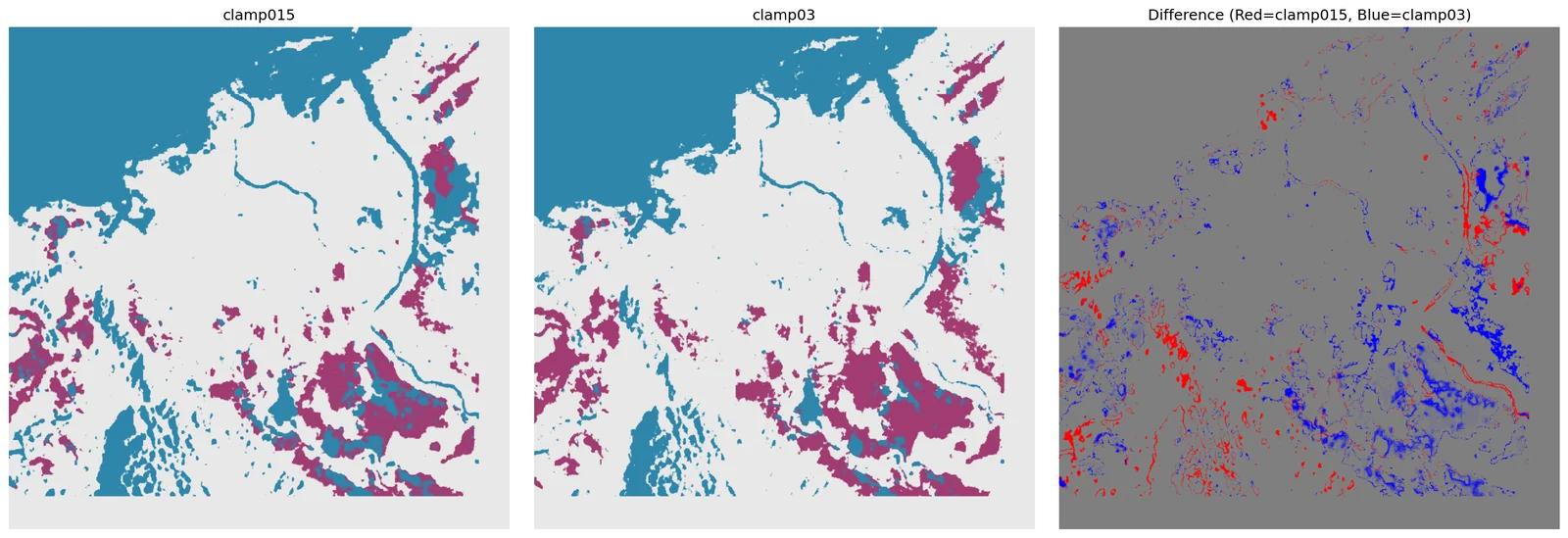

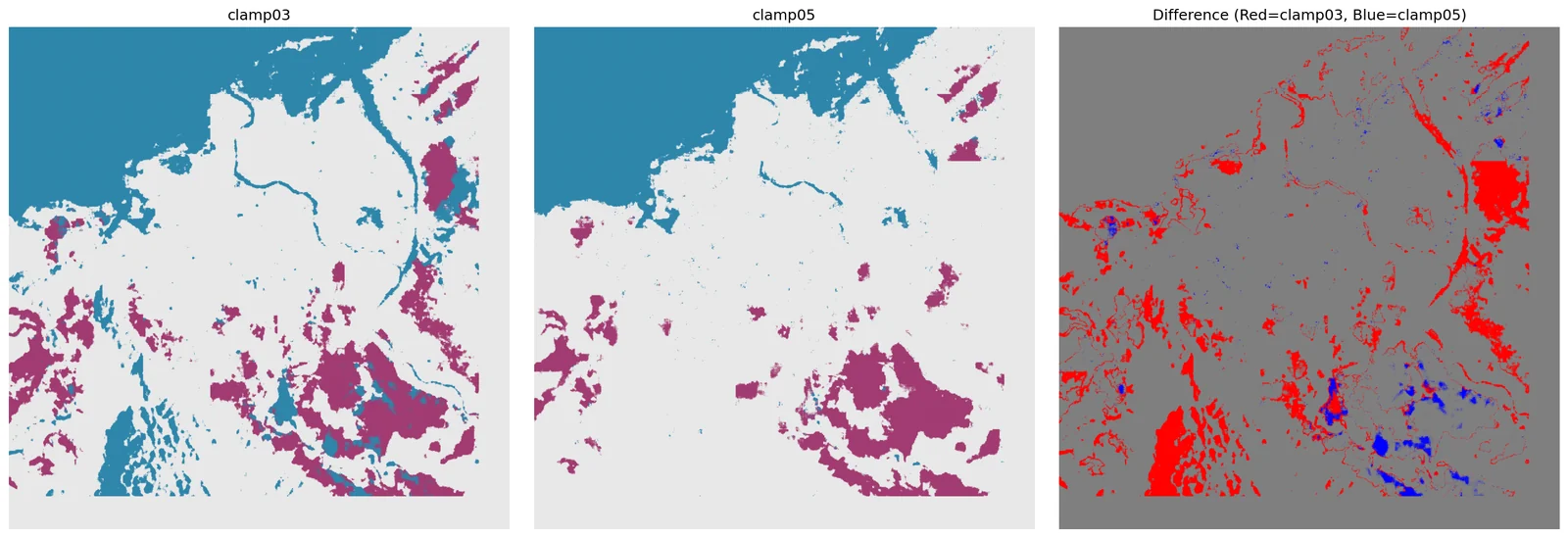

There's no pixel-level flood label for Banda Aceh on 2025-11-26. Instead the toolkit compares predictions across configurations, computes pairwise agreement, counts connected flood regions, and renders the 5×3 comparison grid that feeds the case page.

Script: validate/validate_predictions.py

The validation script doubles as the renderer. Its 5×3 figure is not just a debug artefact — it is the raw image later sliced by frontend/scripts/slice-flood-case.py into the per-config tiles you see in the interactive showcase. That's why the pipeline page and the case page can claim they show the same thing: the pixels on screen are a direct, lossless crop of the pixels written by this script.

- Predictions4 × flood_prediction.tif

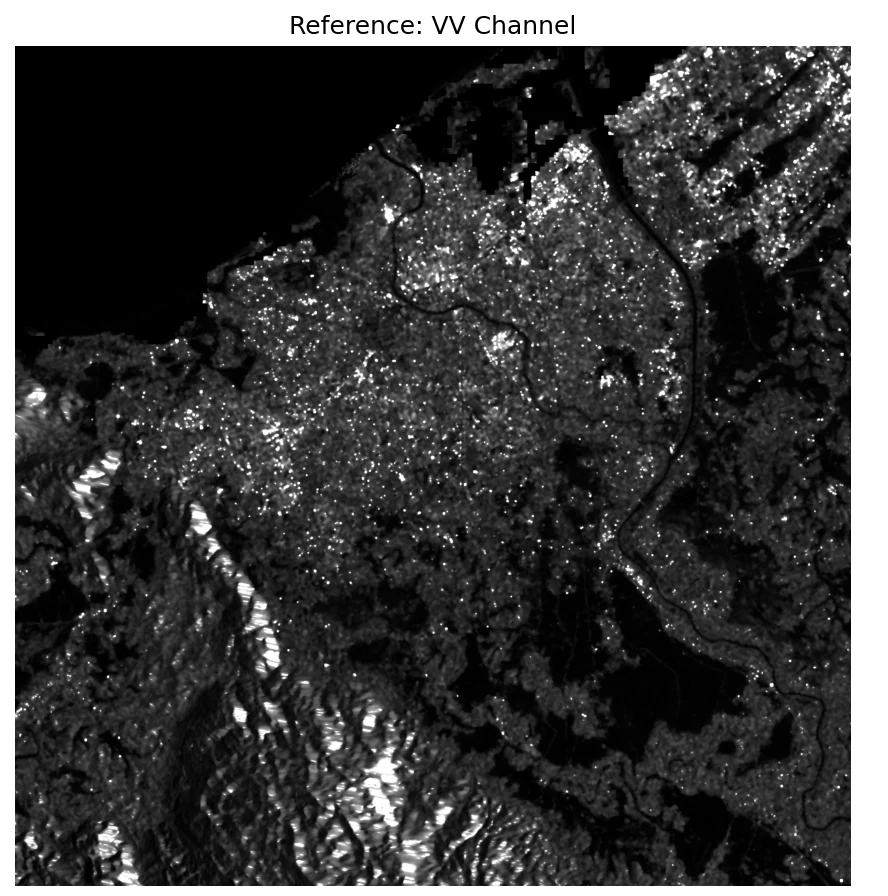

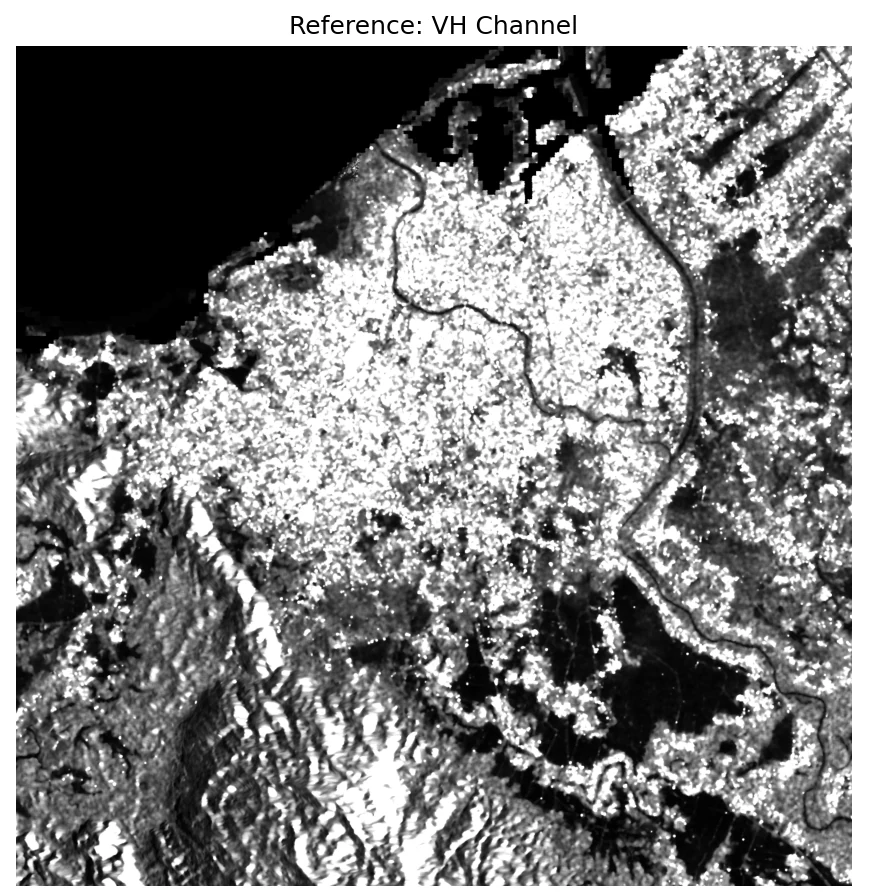

prediction_results_adapted_{original,clamp015,clamp03,clamp05}/ - Reference SARVV + VH bands of the post-event scene

for visual overlay only

- Validation reportper-config stats · pairwise agreement · boundary ratio

validation_report.json - Comparison figure5 rows × 3 cols · reference + 4 configs · 2664×4483

prediction_comparison.png - Difference maps6 pairwise diff PNGs

difference_<a>_vs_<b>.png

Check yourself: Two configs produce 90% pixel agreement. Does that mean they're almost the same prediction?

Code excerpt from validate/validate_predictions.py:

```shell
$ python sar_toolkit/run_banda_aceh_pipeline.py validate
```

```python
# validate/validate_predictions.py (excerpt)
from itertools import combinations

from scipy import ndimage

# Per-config spatial diagnostics
labeled_flood, num_flood = ndimage.label(pred == 2)
boundary_ratio = sum_of_class_boundaries / (2 * (H + W))

# Pairwise agreement across every config pair
# (`predictions` is assumed to map config name -> label array)
for a, b in combinations(configs, 2):
    pred_a, pred_b = predictions[a], predictions[b]
    agreement = (pred_a == pred_b).mean()  # 0.0 ... 1.0

# The reported numbers (validation_report.json)
#   original_vs_clamp03 → agreement = 0.8413
#   clamp015_vs_clamp03 → agreement = 0.9462
#   clamp03_vs_clamp05  → agreement = 0.9050
#   original_vs_clamp05 → agreement = 0.9014

# Grid visualization feeds the case page
create_visualization(predictions, vv, vh, "prediction_comparison.png")
# → later sliced by frontend/scripts/slice-flood-case.py
#   into row/cell webp tiles under public/case-banda-aceh/
```
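Why a large agreement number can mislead is easiest to see on a toy case. The sketch below builds two label maps that agree on 90% of pixels yet share no flood pixel at all. The per-class overlap measure is an addition for illustration; the page does not say that the validation script reports it, and the function names are hypothetical.

```python
from itertools import combinations

import numpy as np


def pairwise_agreement(predictions):
    """Fraction of identical pixels for every pair of configs."""
    return {
        (a, b): float((predictions[a] == predictions[b]).mean())
        for a, b in combinations(sorted(predictions), 2)
    }


def class_overlap(pred_a, pred_b, cls):
    """Intersection over union of one class between two label maps."""
    in_a, in_b = pred_a == cls, pred_b == cls
    union = np.logical_or(in_a, in_b).sum()
    if union == 0:
        return float("nan")  # neither map contains the class
    return float(np.logical_and(in_a, in_b).sum() / union)


# Toy maps: 100 pixels, class 2 = flood
config_a = np.zeros(100, dtype=np.uint8)
config_a[:10] = 2                       # config A flags 10 flood pixels
config_b = np.zeros(100, dtype=np.uint8)  # config B flags none

if __name__ == "__main__":
    print(pairwise_agreement({"a": config_a, "b": config_b}))
    print(class_overlap(config_a, config_b, cls=2))
```

Land dominates the scene, so two configs can match almost everywhere while disagreeing completely about the only class a responder cares about.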

Click any pair — see where the models actually disagree.

No ground truth exists for Banda Aceh on 2025-11-26, so we triangulate: measure the pixel-for-pixel agreement between every pair of configurations. Low numbers are not wrong — they're the teaching signal. Clicking a cell pulls up the real disagreement map.

Scrub through the 50-epoch run that produced the checkpoint above.

The inference you see on the case page is not magic — it comes from a specific checkpoint, saved at a specific epoch of a specific training run. Below is the real shape of that run: loss, per-class IoU, learning-rate schedule, and the exact epoch where the best weights were picked.

Note · The per-epoch values are an illustrative reconstruction of the real 50-epoch run (original TensorBoard log not exported off the training box). The curve shape, LR schedule and best-epoch placement are accurate.
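One common way to pick a deployed checkpoint is to keep the epoch with the highest validation flood IoU. The sketch below shows that rule on made-up numbers. It is an assumption that the real run used exactly this criterion, `best_epoch` is a hypothetical name, and `toy_history` is not data from the 50-epoch run.

```python
def best_epoch(val_flood_iou):
    """Return (1-based epoch, value) of the highest validation flood IoU.

    The earliest epoch wins ties, because max() returns the first maximum.
    """
    if not val_flood_iou:
        raise ValueError("history is empty")
    best_idx = max(range(len(val_flood_iou)), key=lambda i: val_flood_iou[i])
    return best_idx + 1, val_flood_iou[best_idx]


# Illustrative values only, not the real training log
toy_history = [0.10, 0.25, 0.40, 0.38, 0.40, 0.35]

if __name__ == "__main__":
    epoch, value = best_epoch(toy_history)
    print(f"best checkpoint: epoch {epoch} (val flood IoU {value:.2f})")
```

Selecting on validation flood IoU rather than on training loss guards against shipping an epoch that fits the training set better but detects floods worse.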

Eight terms, one page.

Every jargon word used above, defined plainly. Each entry points at the stage where the term first shows up. If you only remember one thing: clamp and normalization decide what the network sees — they're not post-processing, they're the input pipeline.

- SAR (Synthetic Aperture Radar): A side-looking radar that synthesizes a long virtual antenna from the motion of the satellite, producing ground imagery by measuring how much of its own microwave pulse bounces back. First used in the hero; deep-dived in the homepage primer.

- Backscatter, σ⁰ (sigma-naught): The fraction of transmitted radar energy that returns to the satellite from a given ground patch. Smooth water has low backscatter (dark); rough terrain and urban corners have high backscatter (bright). This is the raw number the whole pipeline works on. Stage 01: SNAP calibration gives you calibrated backscatter.

- VV / VH (polarization channels): VV = transmit vertical, receive vertical. VH = transmit vertical, receive horizontal. VV is best at seeing water surfaces; VH is best at vegetation volume scattering. Sentinel-1 delivers both; we feed both to the network. Stage 02: each tile stores VV + VH as a 2-band TIF.

- DEM (Digital Elevation Model): A raster of ground elevation. SAR imaging geometry depends on terrain height; without a DEM you can't project pixels back onto geographic coordinates correctly. We ship an SRTM tile so every machine gets the same DEM. Stage 01: passed to SNAP as -PexternalDEMFile.

- Clamp (input saturation ceiling): Before normalization, every backscatter value above the clamp is clipped down to the clamp. Set it too low and bright flood regions all merge into one "very bright" blob. Set it too high and noise dominates. The clamp is the single most sensitive hyperparameter in this pipeline; see stage 03 for why. Stage 03: 0.15 / 0.3 / 0.5 swept and compared.

- Speckle (coherent-imaging noise): The salt-and-pepper graininess characteristic of SAR images. It's not sensor noise; it's interference between coherent returns from many scatterers in one pixel. Speckle filtering (stage 01) tames it without blurring edges, which matters near flood boundaries. Stage 01: SNAP speckle filter step.

- IoU (Intersection over Union): For a class, IoU = (pixels both model and truth call this class) ÷ (pixels either model or truth call this class). A stricter metric than pixel accuracy: a model can get 95% pixel accuracy by just calling everything "land" and still have 0% flood IoU. Training trajectory: flood IoU is the number we optimize.

- argmax (final decision step): At each pixel the network produces 3 scores (land, water, flood). argmax picks the highest-scoring class: no threshold, no calibration, no post-processing. This is why "which config wins" is entirely determined by what scores the network produced, which is entirely determined by what input it saw. Stage 04: model(x).argmax(1) is the whole decision rule.